This blog post was shared as a talk at AWS re:Invent in December 2021. Enjoy the video here.

As a part of elevating the mobile developer experience at Goldman Sachs, we wanted to modernize how we build and deliver mobile applications. Our goal was to provide a system that would build code, run tests, package, and deliver applications to the Google Play Store or Apple App Store. The current system has evolved over time. It consists of Mac Minis and Mac Pros in data centers that are set up and managed by our IT team. There are different sets of machines dedicated to building, signing, and uploading to either of the app stores, each of which has its own pipeline and process. Software on these machines has been installed or upgraded using a manual management solution. Adding capacity or upgrading hardware could take weeks to months. We sought to build a solution that would be fully automated, from procuring hardware to delivering distribution-signed applications to the Google Play Store or Apple App Store.

Macs are an essential part of a CI/CD solution for Apple platforms. We needed a solution that would allow us to add, remove, and manage Macs remotely. Our mobile application codebase resides on GitLab, which supports the CI/CD workflow and pipeline by registering appropriate runners. We found that the macOS offerings on Amazon Web Services (AWS) Elastic Compute Cloud (EC2) fit all our requirements. We also chose to use macOS machines for Android builds.

With this in mind, we'll now explore how we set up our CI/CD solution.

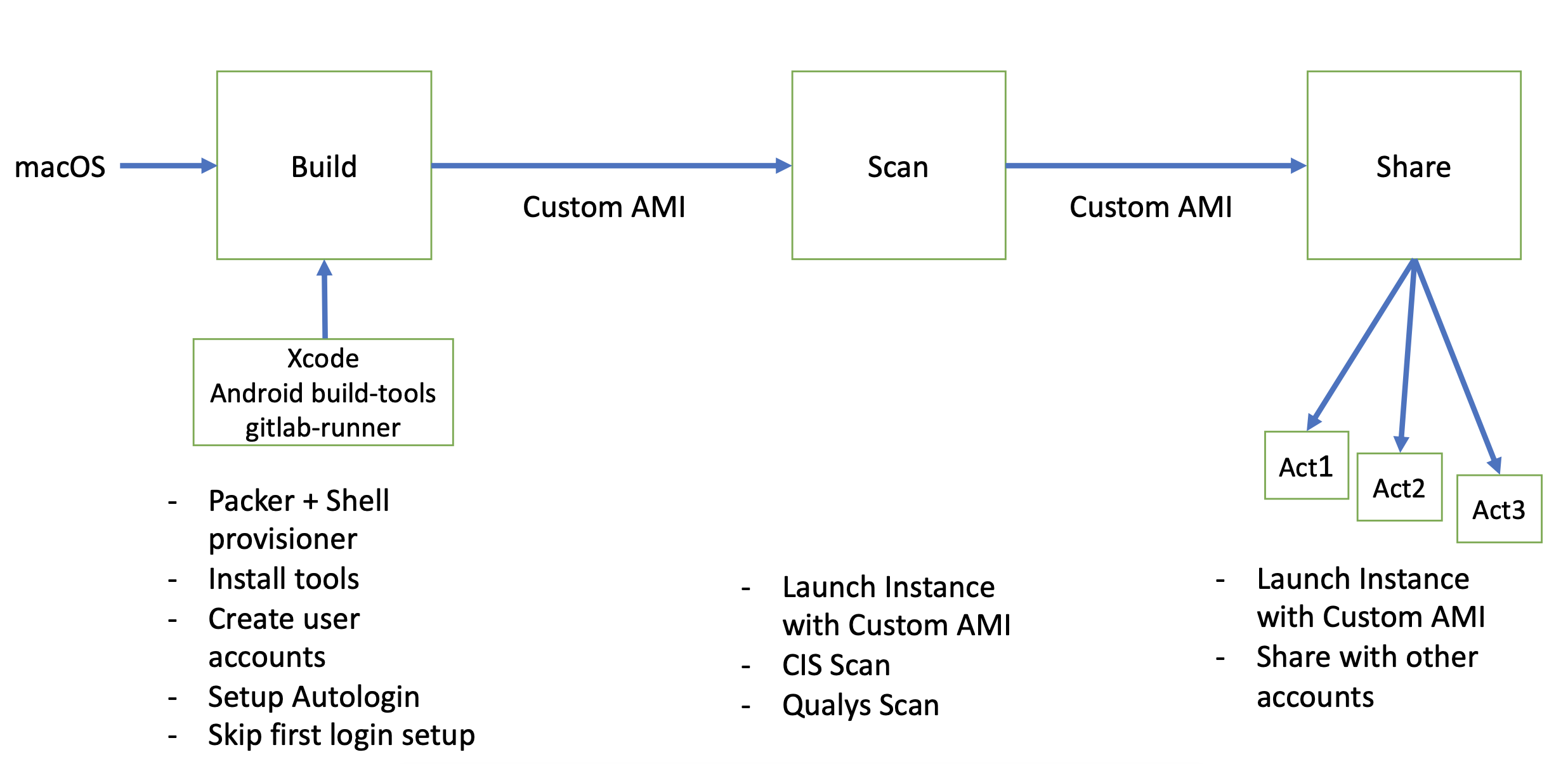

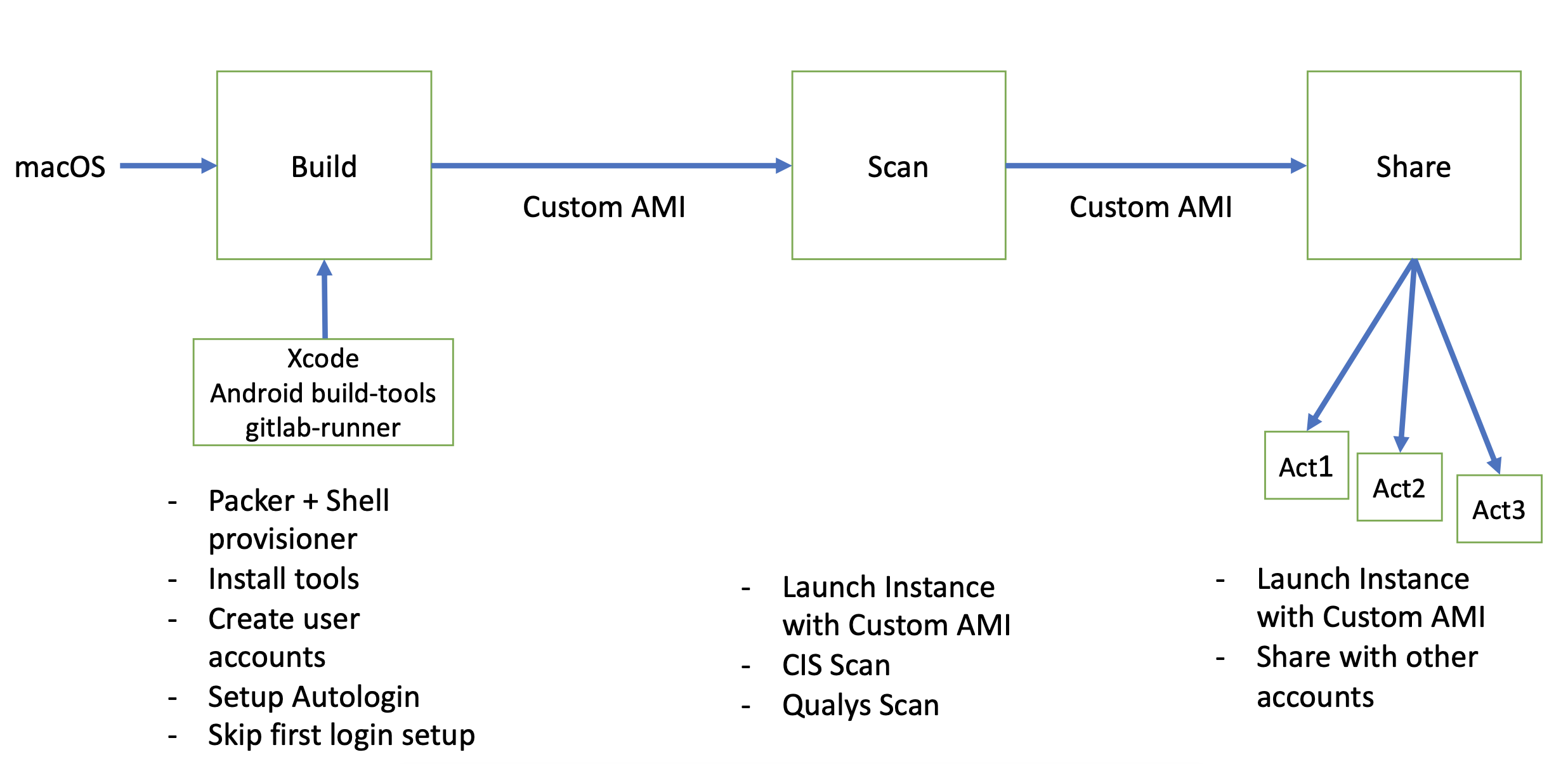

Building the AMI

An Amazon Machine Image (AMI) provides the information required to launch an EC2 instance. This includes a snapshot of all software and configuration required on the instance. We started with the base macOS Catalina and Big Sur AMIs provided by AWS and then customized them with:

- iOS and Android build toolchain.

- Additional tools: SwiftLint, fastlane.

- Monitoring software: Prometheus.

- Connection to the GitLab platform control plane: GitLab Runner.

- An unprivileged user account named gitlab that is logged in automatically and launches gitlab-runner via a LaunchAgent.

- Secure default settings as recommended by our security team, based on CIS Benchmarks.

We created a customized AMI using Packer and shell provisioners. We then spawned an instance of this AMI to run CIS-CAT Pro and Qualys scans, which verify that system settings are configured as recommended by our security team. The scanned AMI is then distributed to the AWS account that we use to set up the CI/CD infrastructure.
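As a rough illustration of the Packer step, the sketch below builds a template in Packer's JSON format with an `amazon-ebs` builder and a `shell` provisioner. It is not our actual template: the AMI ID, region, instance type, AMI name, and script names are all placeholders chosen for the example, and the Dedicated Host placement that EC2 Mac instances require is left out.

```python
import json


def packer_template(source_ami: str, region: str, scripts: list[str]) -> dict:
    """Build a Packer JSON template for a customized macOS AMI (illustrative only)."""
    return {
        "builders": [
            {
                "type": "amazon-ebs",
                "region": region,
                "source_ami": source_ami,       # base macOS AMI provided by AWS
                "instance_type": "mac1.metal",  # assumed instance type
                "ssh_username": "ec2-user",
                "ami_name": "mobile-ci-macos-{{timestamp}}",  # hypothetical name
            }
        ],
        "provisioners": [
            # Scripts run on the temporary instance before the AMI is captured.
            {"type": "shell", "scripts": scripts}
        ],
    }


if __name__ == "__main__":
    template = packer_template(
        source_ami="ami-0123456789abcdef0",  # placeholder ID
        region="us-east-1",                  # placeholder region
        scripts=["install-toolchain.sh", "install-gitlab-runner.sh", "harden.sh"],
    )
    print(json.dumps(template, indent=2))
```

The printed JSON could be saved to a file and passed to `packer build`; keeping one script per concern makes it easier to see which customization a failed build stopped at.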

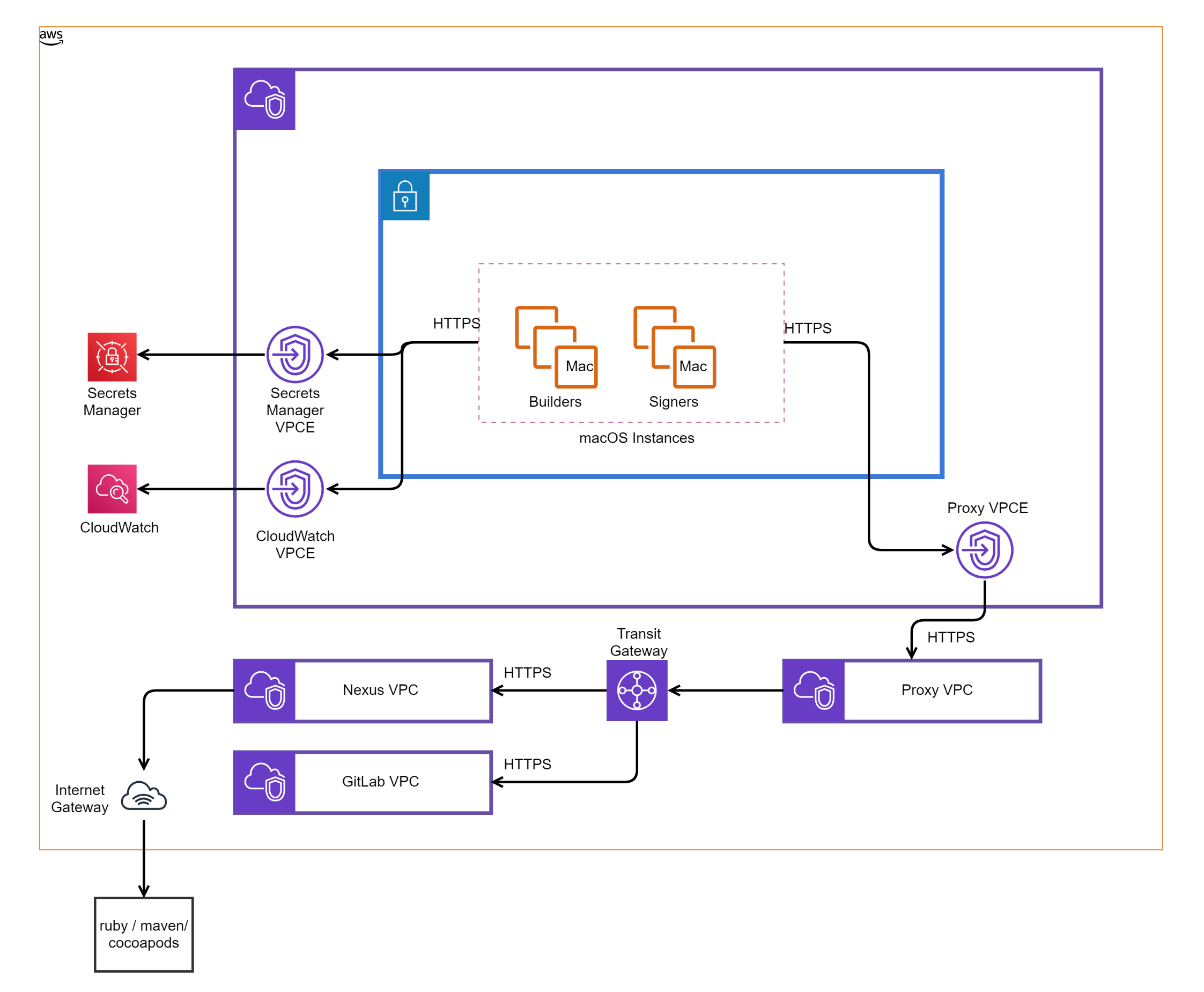

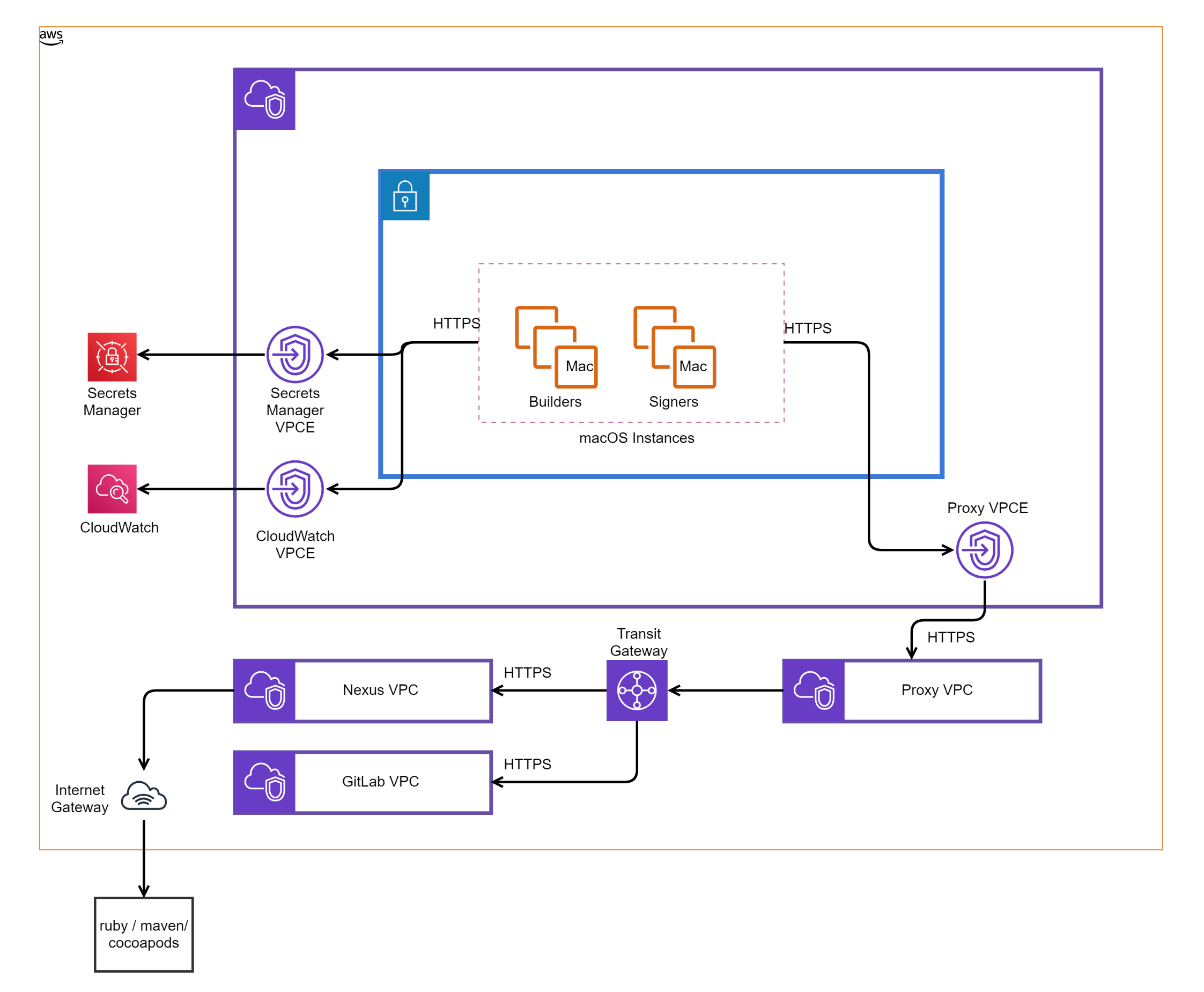

Setting Up Infrastructure

We use Terraform to define our Infrastructure as Code (IaC). Our Terraform configuration defines the network topology, macOS instances, and connectivity to other services. These definitions are transformed to infrastructure within AWS by an automated pipeline. During this process macOS instances are programmatically provisioned within our AWS account and launched with the customized AMI, eliminating the manual steps required to procure and provision Macs.
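To give a feel for what such a definition contains, here is a minimal sketch that emits a single macOS instance in Terraform's JSON syntax. The resource name, IDs, instance type, and instance profile name are hypothetical; our real configuration is written differently and also covers the network topology and service connectivity.

```python
import json


def macos_instance(name: str, ami_id: str, subnet_id: str,
                   host_id: str, instance_profile: str) -> dict:
    """Return a Terraform JSON document declaring one macOS EC2 instance."""
    return {
        "resource": {
            "aws_instance": {
                name: {
                    "ami": ami_id,                  # the customized AMI
                    "instance_type": "mac1.metal",  # assumed instance type
                    "tenancy": "host",              # Mac instances run on Dedicated Hosts
                    "host_id": host_id,
                    "subnet_id": subnet_id,         # private subnet of the environment
                    "iam_instance_profile": instance_profile,
                    "tags": {"Name": name},
                }
            }
        }
    }


if __name__ == "__main__":
    doc = macos_instance(
        name="builder",
        ami_id="ami-0123456789abcdef0",   # placeholder
        subnet_id="subnet-0123456789abcdef0",  # placeholder
        host_id="h-0123456789abcdef0",    # placeholder
        instance_profile="mobile-ci-builder",  # hypothetical profile name
    )
    print(json.dumps(doc, indent=2))
```

Terraform reads files ending in `.tf.json` alongside regular `.tf` files, so output like this could sit next to hand-written configuration.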

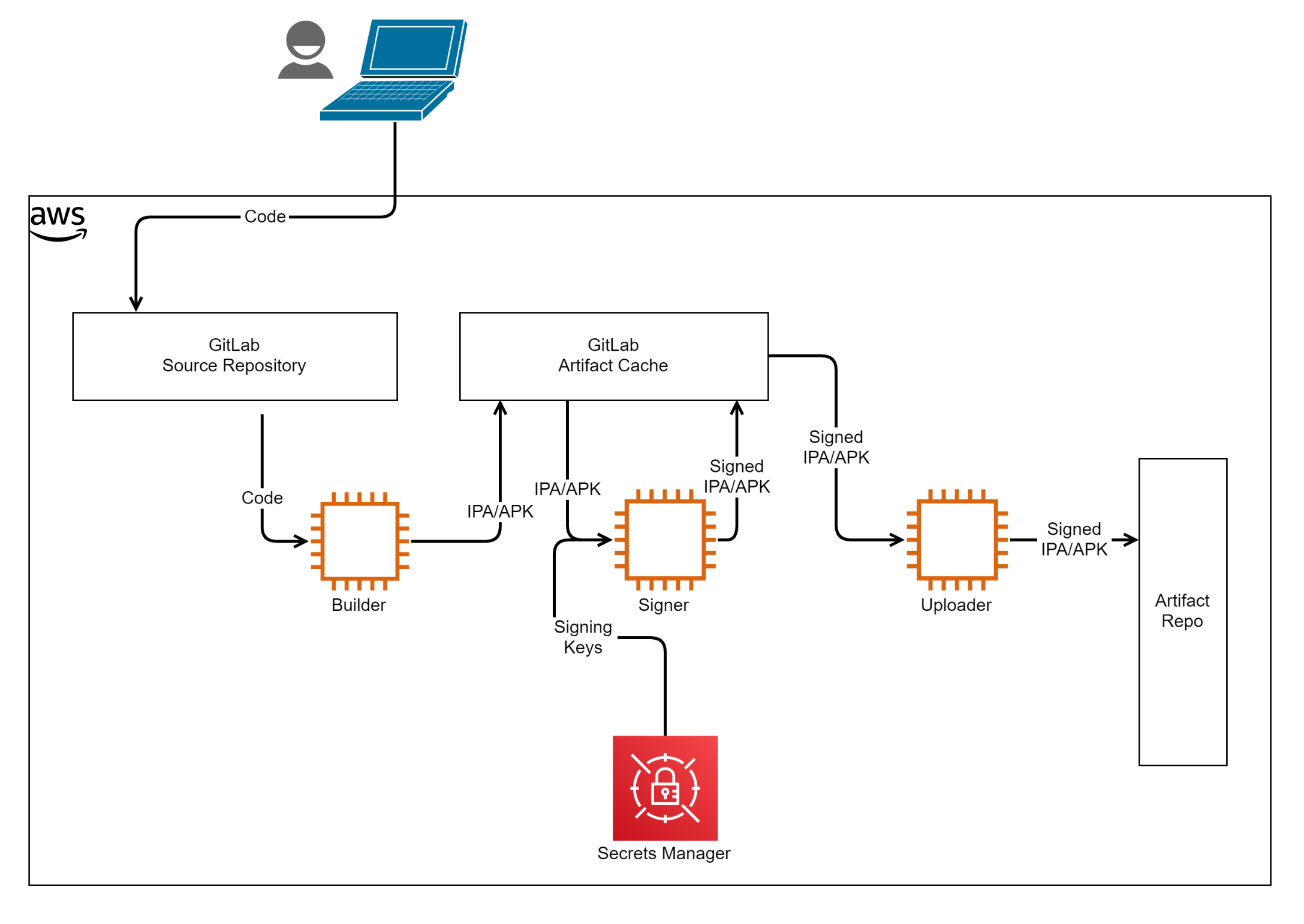

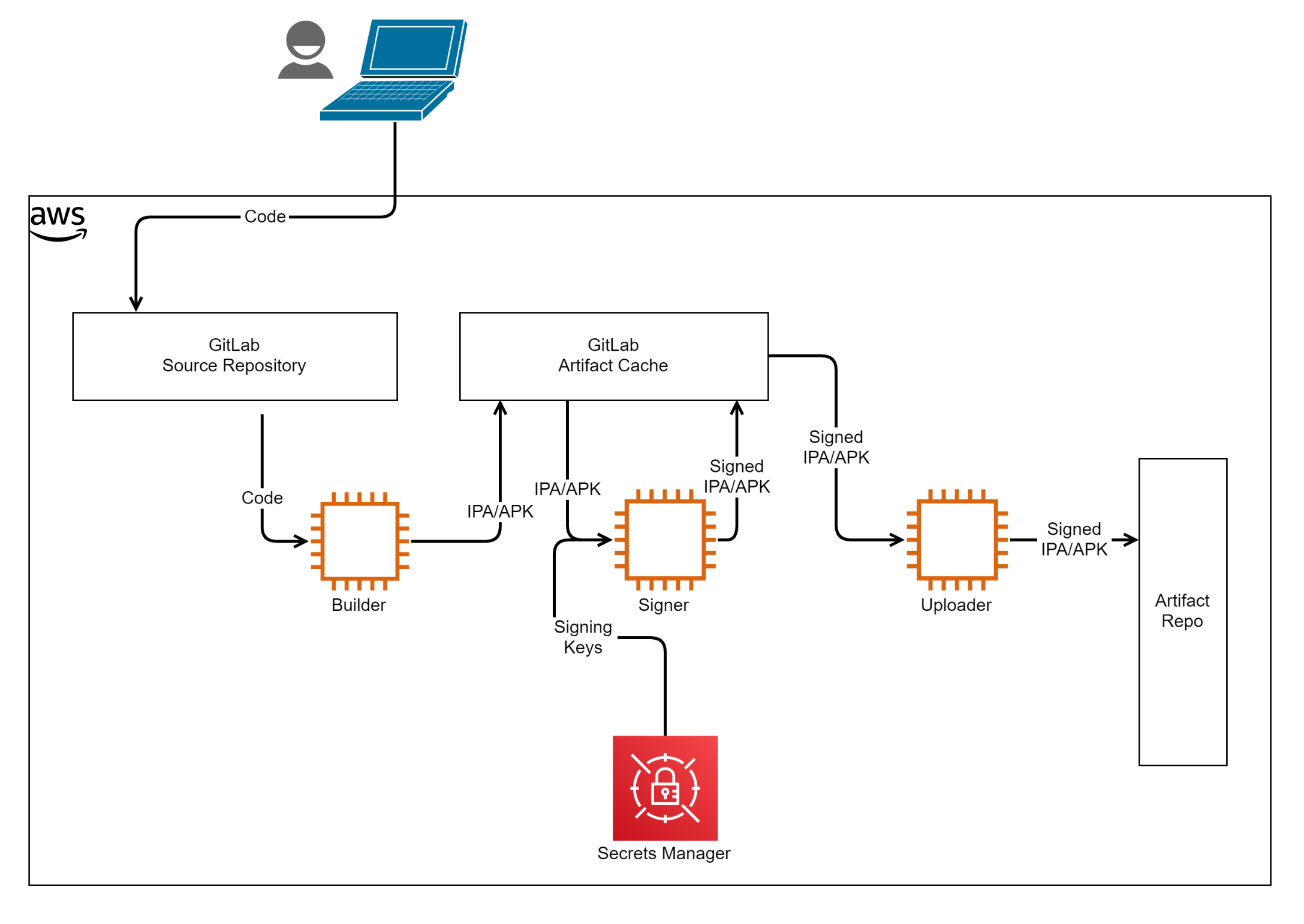

We have set up automated deployments of development, staging, and production environments of the CI/CD system. Each environment is deployed in its own private subnet without direct Internet access. VPC endpoints are configured to allow access to GitLab, Nexus and other AWS services. Here is a high-level overview of our infrastructure:

Nexus scans vendor-provided and open-source dependencies for license compatibility and known vulnerabilities. Nexus also caches the artifacts, which speeds up subsequent fetches and provides resilience in case the external repository is down.

Builders and Signers have their own IAM instance profiles which control access to elements in the Secrets Manager. Specifically, only Signers have access to distribution signing keys. The launch sequence of a macOS instance is handled by AWS-provided ec2-macos-init. The final stage of initialization executes a user-provided script referred to as user-data. We use inline scripts in user-data for some initial setup, and fetch scripts stored in source control to perform some tasks when a new Builder/Signer is provisioned. These tasks include:

- Installing custom root certificates.

- Installing fastlane.

- Configuring sudoers file.

- Retrieving gitlab-runner token from Secrets Manager.

- Registering gitlab-runner with tags based on installed versions of Xcode and Android build-tools.
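The last two tasks can be sketched as follows. This is an assumption-laden illustration rather than our provisioning script: the tag naming scheme, the secret ID, and the GitLab URL are made up, `secrets_client` stands for a boto3 Secrets Manager client, and the custom executor's script paths are omitted from the registration command.

```python
def fetch_runner_token(secrets_client, secret_id: str) -> str:
    """Read the runner token; secrets_client is assumed to be a boto3
    Secrets Manager client (or anything with the same method)."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return response["SecretString"]


def runner_tags(xcode_versions: list[str], android_build_tools: list[str]) -> list[str]:
    """Derive runner tags from installed toolchain versions (hypothetical scheme)."""
    tags = [f"xcode-{version}" for version in xcode_versions]
    tags += [f"android-build-tools-{version}" for version in android_build_tools]
    return tags


def register_command(url: str, token: str, tags: list[str]) -> list[str]:
    """Build the argument list for a non-interactive gitlab-runner registration."""
    return [
        "gitlab-runner", "register",
        "--non-interactive",
        "--url", url,
        "--registration-token", token,
        "--executor", "custom",
        "--tag-list", ",".join(tags),
    ]


if __name__ == "__main__":
    class FakeSecretsClient:
        """Stand-in for the real client so the sketch runs without AWS access."""
        def get_secret_value(self, SecretId):
            return {"SecretString": "example-token"}

    token = fetch_runner_token(FakeSecretsClient(), "mobile-ci/runner-token")
    tags = runner_tags(["12.5", "13.2"], ["30.0.3"])
    print(register_command("https://gitlab.example.com", token, tags))
```

Tagging runners by toolchain version lets a job's `tags:` entry in its pipeline definition select a machine that actually has the Xcode or Android build-tools version the project needs.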

Note: User-data is executed as root. Some scripts create files and directories that will be accessed by accounts with lower privileges, so they must set the right ownership and permissions on them.
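A minimal sketch of that pattern, assuming a helper named `make_owned_dir` (hypothetical) that a root-run setup script could call: it creates a directory, sets its mode explicitly, and hands it to a lower-privileged account.

```python
import os
import shutil
import stat
import tempfile


def make_owned_dir(path, mode=0o750, user=None, group=None):
    """Create a directory, set its permissions, and optionally change its owner.

    Changing ownership to another account only succeeds when running as root,
    which is the case for user-data.
    """
    os.makedirs(path, exist_ok=True)
    os.chmod(path, mode)  # explicit chmod, so the result does not depend on umask
    if user is not None or group is not None:
        shutil.chown(path, user=user, group=group)
    return stat.S_IMODE(os.stat(path).st_mode)


if __name__ == "__main__":
    # Demo in a temporary location; no ownership change, so root is not needed.
    demo = os.path.join(tempfile.mkdtemp(), "runner-config")
    print(oct(make_owned_dir(demo)))
```

In a root-run script the call would look like `make_owned_dir("/Users/gitlab/ci-config", user="gitlab")`, where the path is only an example.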

Once the setup steps in user-data have completed successfully, the gitlab account is logged in, and gitlab-runner, which is started by the LaunchAgent, begins polling for available jobs.

Workflow

Now we'll explore how we set up an environment for every build. We'll also explore additional details on building and signing the mobile application.

Sandboxing

A build in a CI/CD system has to run in a clean environment, with a version of the operating system and build toolchain specified in the build configuration. A clean environment means that a copy of the code to be built is checked out from version control, and any build time dependencies are set up each and every time. Any artifacts from a previous run of the same or different application build must not be available. This is to ensure any test failures can be attributed to changes in code rather than the build environment. Usually, containers like Docker or virtual machines are used to provide such ephemeral build environments. iOS builds cannot be containerized within Docker. We also do not use virtual machines in order to keep things simple. We use the custom executor provided by the gitlab-runner to provide a sandbox for each build. An executor has four stages:

- configure - Provide configuration variables for the build.

- prepare - Set up the environment for the build.

- run - Fetch source code, build, download artifact cache, etc.

- cleanup - Tear down the build environment set up in the prepare stage.

Each stage in a custom executor maps to an executable or a shell script that is launched by gitlab-runner. Recall that we have set up our AMI to launch gitlab-runner as a user account called gitlab, so each of the above stages would be executed as user gitlab. As a result, a build could store artifacts in any part of the filesystem the gitlab user has write access to, including the home folder. To provide a clean build environment, the home folder for gitlab would therefore have to be cleared before and after each build. That isn't possible, as we need gitlab-runner to keep running and certain configuration files to be retained in the home folder. To solve this, we created another user account named cibuilder and arranged for all commands during the run stage to be executed as this user. cibuilder is restricted to have write access only within its home folder. The configure, prepare, and cleanup stages run as user gitlab and are configured to terminate all jobs running as cibuilder and recreate its home folder, effectively sandboxing the build.
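The sandbox reset described above might look roughly like the following. The sketch only builds the command lists; the home folder path, the use of `sudo`, and the exact commands are assumptions for illustration, not a copy of our executor scripts.

```python
def reset_sandbox_commands(build_user: str = "cibuilder",
                           home: str = "/Users/cibuilder") -> list[list[str]]:
    """Commands that stop leftover build processes and recreate the home folder.

    Intended to be run by the gitlab user, which is assumed to have the
    necessary sudo rights. Note that pkill exits non-zero when no process
    matches, so a caller should tolerate that.
    """
    return [
        ["sudo", "pkill", "-KILL", "-u", build_user],  # terminate leftover jobs
        ["sudo", "rm", "-rf", home],                   # drop artifacts of earlier builds
        ["sudo", "mkdir", "-p", home],
        ["sudo", "chown", build_user, home],
        ["sudo", "chmod", "700", home],
    ]


def run_as_build_user(script_path: str, build_user: str = "cibuilder") -> list[str]:
    """Command used during the run stage to execute a job script as the build user."""
    return ["sudo", "-H", "-u", build_user, "/bin/bash", script_path]


if __name__ == "__main__":
    for command in reset_sandbox_commands():
        print(" ".join(command))
    print(" ".join(run_as_build_user("/tmp/job-script.sh")))  # placeholder path
```

Because the build user owns nothing outside its home folder, deleting and recreating that one folder is enough to give the next build a clean slate, without touching the files gitlab-runner itself depends on.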

Building the App

We use fastlane to drive the build process. The signing certificate, provisioning profile, and keystore required for a build are set up using fastlane actions. Application teams use a YAML file to specify:

- Project/workspace, scheme, configuration, etc. for iOS projects.

- Task, flavor, and buildType for Android projects.

A set of scripts managed by our team generates a Fastfile based on this YAML and kicks off the build. Only development-signing elements are made available during this phase. As described earlier, the configure, prepare, and cleanup stages of the custom executor run as gitlab and the run stage runs as cibuilder, restricting access for application-provided scripts to cibuilder's home folder. Application packages, test results, and log files are uploaded to the GitLab artifact cache at the end of each build.
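As an illustration of the YAML-to-Fastfile step, the sketch below turns an already-parsed configuration (a plain dictionary here) into a lane that calls fastlane's `build_app` or `gradle` action. The lane names and the dictionary layout are assumptions, and the quoting is deliberately naive; our real generator handles more options.

```python
def _ruby_args(pairs) -> str:
    """Render (name, value) pairs as Ruby keyword arguments with quoted strings."""
    return ", ".join(f'{name}: "{value}"' for name, value in pairs)


def ios_lane(cfg: dict) -> str:
    """Generate a lane for an iOS project from its build settings."""
    keys = ("workspace", "project", "scheme", "configuration")
    args = _ruby_args((key, cfg[key]) for key in keys if key in cfg)
    return f"lane :build_ios do\n  build_app({args})\nend\n"


def android_lane(cfg: dict) -> str:
    """Generate a lane for an Android project from its build settings."""
    # YAML key -> parameter name of fastlane's gradle action
    key_map = {"task": "task", "flavor": "flavor", "buildType": "build_type"}
    args = _ruby_args((param, cfg[key]) for key, param in key_map.items() if key in cfg)
    return f"lane :build_android do\n  gradle({args})\nend\n"


if __name__ == "__main__":
    print(ios_lane({"workspace": "Demo.xcworkspace", "scheme": "Demo",
                    "configuration": "Debug"}))
    print(android_lane({"task": "assemble", "flavor": "Internal",
                        "buildType": "Debug"}))
```

Generating the Fastfile centrally, instead of accepting one from each application team, keeps the set of fastlane actions that run on the builders under the control of the CI/CD team.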

Signing the App

The iOS application package generated by the build phase is signed using the Development Certificate, and hence can only run on a few devices within the development teams. The Android application package is signed with a key scoped only for the builders, and is not eligible for upload to the Google Play Store. We use the fastlane action resign to re-sign artifacts produced by the build phase to prepare them for distribution on the respective app stores. We follow the build and re-sign approach to limit the distribution signing keys to only a few hosts that are configured to run only scripts provided by the Goldman Sachs Mobile CI/CD team. The custom executor for gitlab-runner on the hosts used to re-sign is configured with a run stage that allows only these sub-stages:

- prepare_script

- get_sources

- restore_cache

- download_artifacts

- archive_cache

- upload_artifacts_on_success

- cleanup_file_variables

The after_script sub-stage is skipped and the build_script sub-stage is overridden to re-sign the artifact downloaded by the download_artifacts sub-stage. See the description of the custom executor's run stage in the GitLab Runner documentation to learn more about each of the sub-stages above. The scripts used to re-sign store artifacts in well-known locations, which can be cleaned up without having to erase the home folder. Hence we don't need the cibuilder account on these hosts, and the configure, prepare, run, and cleanup stages all run as gitlab.
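One way to picture the restricted run stage is a small dispatcher like the one below. GitLab Runner invokes the run executable with the path of a generated script and the name of the sub-stage; the sketch decides what to execute for each sub-stage. The re-sign script path and the use of `/bin/bash` are assumptions, and this is not our production executor.

```python
import subprocess
import sys

# Sub-stages whose runner-generated script is executed unchanged.
PASS_THROUGH = frozenset({
    "prepare_script",
    "get_sources",
    "restore_cache",
    "download_artifacts",
    "archive_cache",
    "upload_artifacts_on_success",
    "cleanup_file_variables",
})


def plan_sub_stage(sub_stage: str, runner_script: str, resign_script: str):
    """Return the command to run for a sub-stage, or None to skip it."""
    if sub_stage in PASS_THROUGH:
        return ["/bin/bash", runner_script]
    if sub_stage == "build_script":
        # Ignore the job's own script and run the team-provided re-sign script.
        return ["/bin/bash", resign_script]
    if sub_stage == "after_script":
        return None
    raise ValueError(f"sub-stage not allowed on a signer: {sub_stage}")


if __name__ == "__main__" and len(sys.argv) == 3:
    # argv[1]: script generated by gitlab-runner, argv[2]: sub-stage name
    command = plan_sub_stage(sys.argv[2], sys.argv[1], "/opt/ci/resign.sh")  # hypothetical path
    if command is not None:
        sys.exit(subprocess.run(command).returncode)
```

Rejecting unknown sub-stages by default means a job cannot get application-provided commands onto a signer simply by using a sub-stage the dispatcher has never heard of.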

Further down in the build pipeline, uploaders transfer the signed artifacts from the GitLab artifact cache to the artifact repository for long-term storage.

Conclusion

We went from a system that was set up manually, that took significant time to procure and provision hardware for, that made hardware and software upgrades difficult, and that required coordination of multiple teams, to a setup that is streamlined, centralized, and automated. We maintained the security model of limiting the distribution keys to only a few designated hosts, and provided simple yet effective sandboxing for builds. The new system was set up in a matter of months and was only possible due to well-accepted processes and tools associated with AWS EC2.

We are actively working on completing the continuous delivery part of the pipeline, and have many more exciting plans for macOS on EC2. We are also hiring for several exciting roles.

See https://www.gs.com/disclaimer/global_email for important risk disclosures, conflicts of interest, and other terms and conditions relating to this blog and your reliance on information contained in it.
